Introduction: A Healthcare Revolution or Hype?

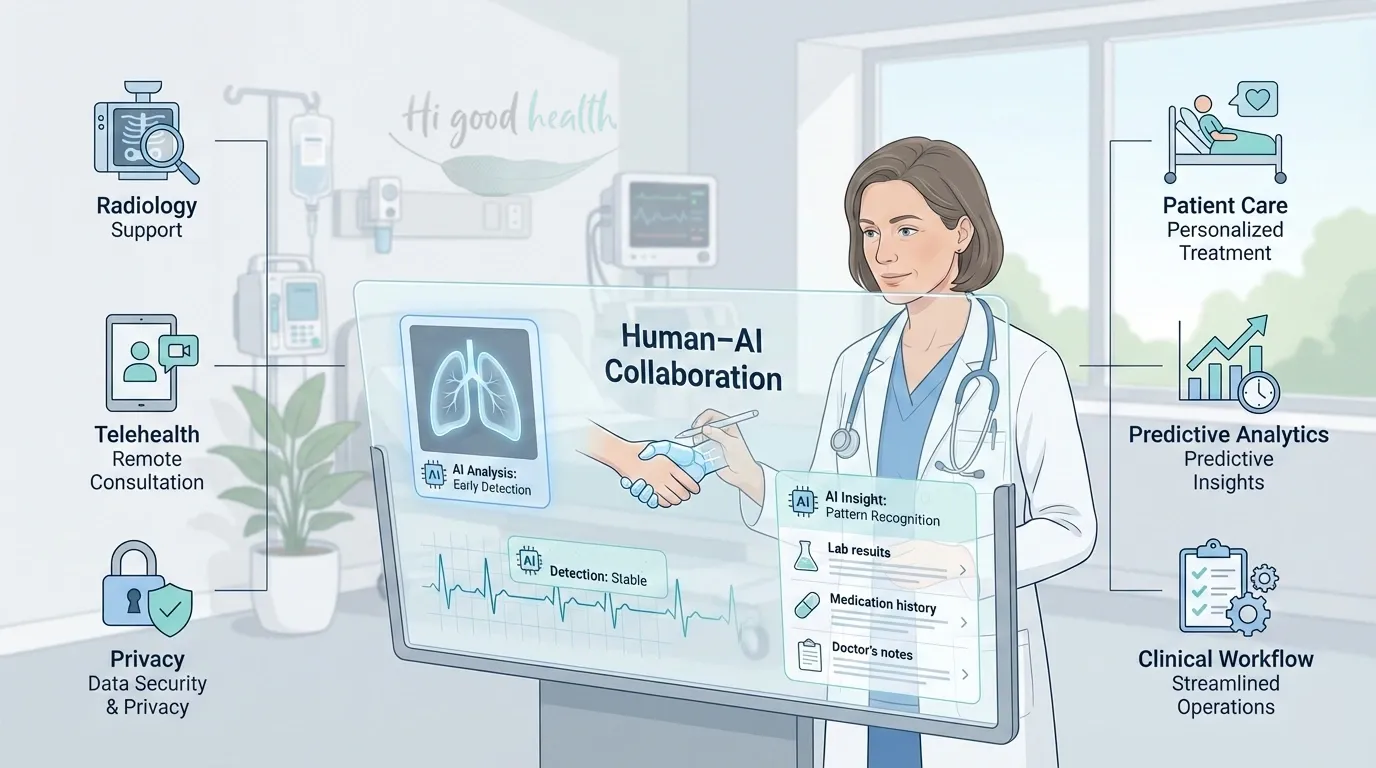

In 2026, artificial intelligence (AI) is no longer a futuristic concept in American healthcare. It is here, shaping everything from hospital workflows to personal wellness apps. Algorithms now help radiologists detect cancer, flag patients at risk of cardiac events, and even draft patient records. Telehealth platforms deploy AI-powered chatbots to triage patients before they ever speak with a doctor.

Supporters call AI the most transformative leap in medicine since antibiotics, promising faster, more accurate, and more affordable care. Critics warn of bias, privacy breaches, and an erosion of the human touch in healing. For patients, the stakes are high: AI could save lives — or put them at risk if unchecked.

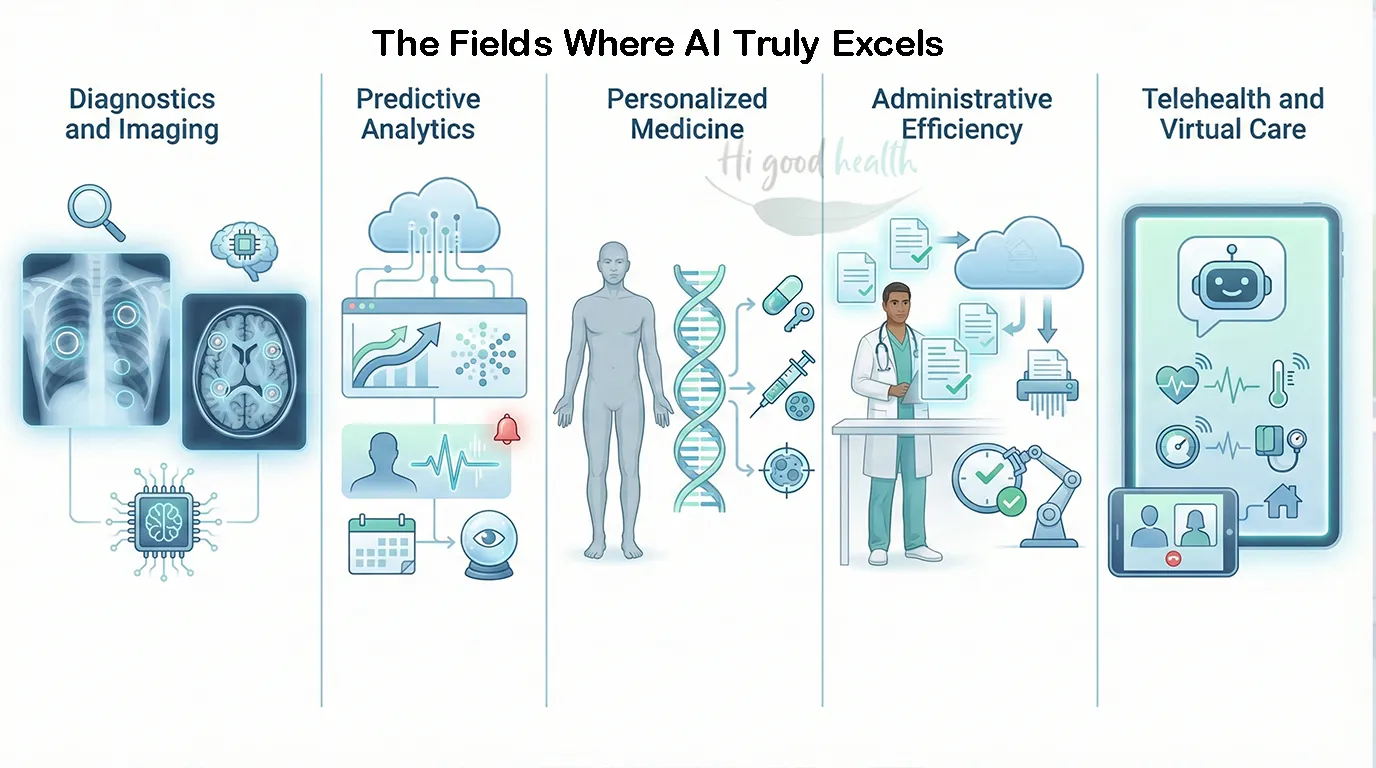

Where AI Shows Real Promise

1. Diagnostics & Imaging

- Radiology: AI tools analyze X-rays, CT scans, and MRIs for tumors, fractures, and lung disease.

- FDA clearances: Hundreds of AI-enabled medical devices, most of them in radiology, have been authorized for US use.

- Accuracy: In some published studies, AI tools have matched or outperformed radiologists at detecting breast cancer and lung nodules.

2. Predictive Analytics

- AI models crunch data from EHRs (electronic health records) to flag patients at risk of heart failure, sepsis, or readmission.

- Hospitals use predictive dashboards to allocate resources and prevent ER overcrowding.

3. Personalized Medicine

- AI tailors treatments based on genetic profiles.

- Oncology uses machine learning to match patients with the most effective targeted therapies.

4. Administrative Efficiency

- AI reduces paperwork by generating visit summaries and insurance codes.

- Doctors reclaim time for patients, reducing burnout.

5. Telehealth & Virtual Care

- AI chatbots triage symptoms before a telemedicine consult.

- Remote monitoring tools alert clinicians when vitals deviate.
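To make the remote-monitoring idea concrete, here is a minimal sketch of a range check that could sit behind such an alert. The vital names, the `RANGES` table, and the thresholds are hypothetical values chosen for illustration, not clinical guidance, and real products likely use far more sophisticated models.

```python
from dataclasses import dataclass


@dataclass
class VitalRange:
    low: float
    high: float


# Hypothetical thresholds for illustration only; not clinical guidance.
RANGES = {
    "heart_rate_bpm": VitalRange(50, 110),
    "spo2_percent": VitalRange(92, 100),
}


def out_of_range(reading: dict[str, float]) -> list[str]:
    """Return the names of vitals that fall outside their configured range."""
    alerts = []
    for name, value in reading.items():
        limits = RANGES.get(name)
        # Vitals without a configured range are ignored rather than flagged.
        if limits is not None and not (limits.low <= value <= limits.high):
            alerts.append(name)
    return alerts
```

In this sketch, a reading such as `{"heart_rate_bpm": 128, "spo2_percent": 89}` would return both names, and a monitoring service would then decide how to notify the clinician.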

Case Studies: Americans & AI-Driven Care

Case 1: Lisa, 48, California

Her mammogram was initially read as “normal,” but an AI tool detected subtle anomalies. A biopsy confirmed early-stage breast cancer, caught months earlier than a human eye alone might have. Treatment was successful.

Case 2: James, 62, Florida

James, who has congestive heart failure, wore a patch that transmitted real-time data. AI algorithms flagged signs of a possible flare-up and alerted his doctor, who intervened before hospitalization was needed.

Case 3: Maria, 35, New York

When Maria opened her insurer’s app to report abdominal pain, an AI chatbot suggested “acid reflux.” But her condition worsened. At the ER, she was diagnosed with appendicitis. The missed triage nearly cost her life.

The Pitfalls Patients Face

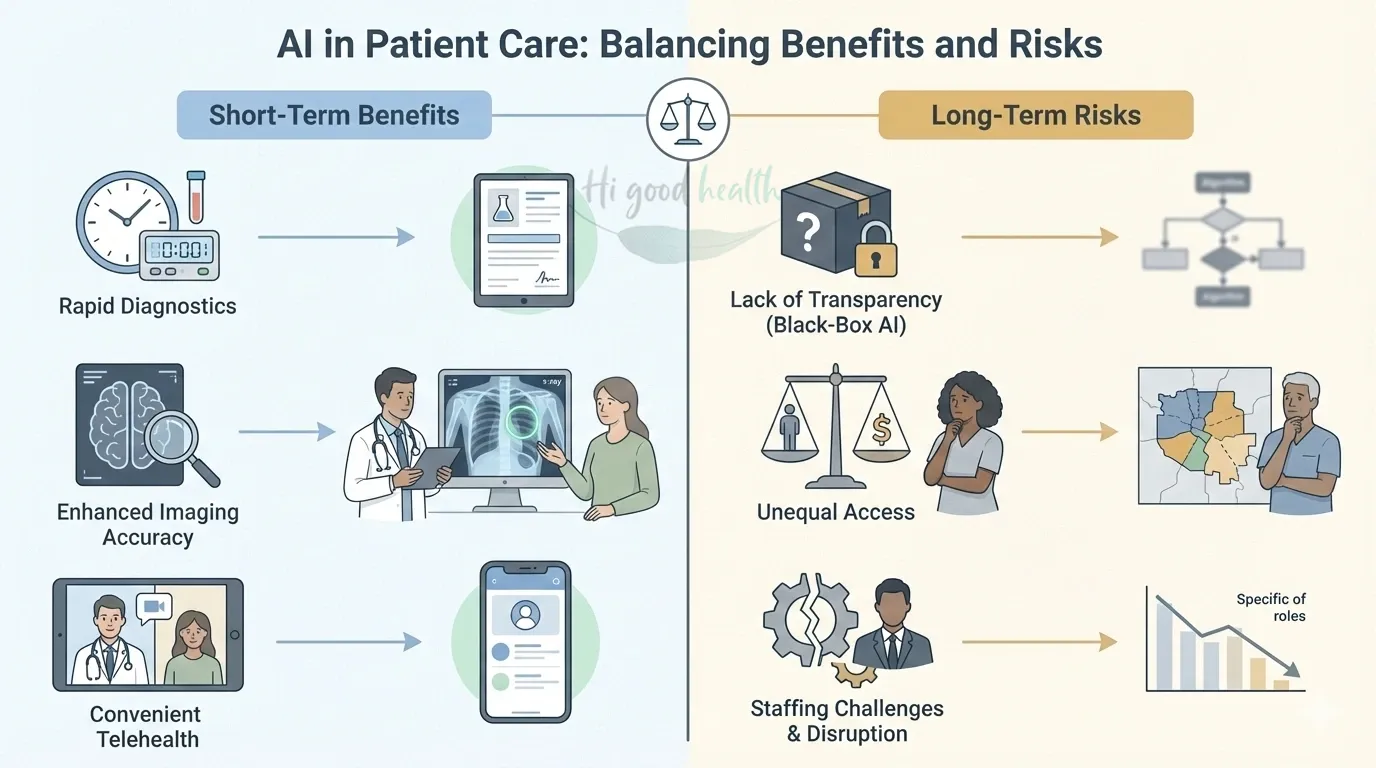

1. Bias & Inequity

- AI trained on biased datasets may misdiagnose minorities.

- Example: Equations for estimating kidney function that included a race adjustment tended to overestimate kidney function in Black patients, which understated their disease and could delay treatment.
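One common way to look for this kind of gap is to compare how often a tool catches true cases in each patient group. The sketch below assumes a hypothetical list of `(group, has_disease, model_flagged)` records; the group labels and the toy data are made up for illustration and are not real patient results.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, has_disease, model_flagged) tuples.

    Returns {group: fraction of true cases the model flagged}.
    """
    found = defaultdict(int)
    total = defaultdict(int)
    for group, has_disease, model_flagged in records:
        if has_disease:
            total[group] += 1
            if model_flagged:
                found[group] += 1
    return {g: found[g] / total[g] for g in total}


# Made-up toy data, not real patient results.
toy = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", True, False),
    ("B", True, True), ("B", True, False), ("B", True, False), ("B", True, False),
    ("A", False, False), ("B", False, True),
]
rates = sensitivity_by_group(toy)
```

With this toy data the tool catches 75% of true cases in group A but only 25% in group B, the kind of disparity an audit is meant to surface before a tool reaches patients.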

2. Privacy Concerns

- AI needs massive data sets — raising risks of data leaks and misuse.

- US breaches like the 2023 HCA Healthcare hack exposed millions of patient records.

3. Overreliance on Algorithms

- Risk of “automation bias,” where doctors trust AI even when it’s wrong.

- Patients may not know when AI is behind their diagnosis.

4. Lack of Regulation

- The FDA has cleared hundreds of AI-enabled devices, but oversight struggles to keep pace.

- No national standards exist for testing AI across diverse populations.

5. Erosion of Human Touch

- Patients value empathy, nuance, and trust, qualities AI can’t replicate.

- Overuse of chatbots risks alienating vulnerable groups like seniors.

The Economics of AI in US Healthcare

- Cost savings: AI applications could save the US healthcare economy up to $150 billion annually, according to a 2017 Accenture estimate originally projected for 2026.

- Investment: US healthcare AI market projected at $50 billion by 2030.

- Patient bills: Some AI-enabled diagnostics cost less, but advanced AI-driven therapies can be expensive.

💡 Concern: If insurers and hospitals adopt AI mainly for profit, patients may see savings evaporate.

The Science: How AI Actually Works

- Machine Learning (ML): Algorithms “learn” from patterns in data (e.g., spotting tumors).

- Natural Language Processing (NLP): Extracts insights from doctors’ notes and patient charts.

- Generative AI: Summarizes records, drafts letters, or answers patient questions.

- Limitations: AI requires high-quality data — garbage in, garbage out.
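As a rough illustration of both "learning from patterns" and "garbage in, garbage out," the sketch below learns a single cutoff from labeled examples. The `learn_threshold` function, the "lesion size" scores, and the labels are all hypothetical; real diagnostic models are far more complex, but the dependence on label quality is the same.

```python
def learn_threshold(values, labels):
    """Pick the cutoff that classifies the most training examples correctly.

    An example is predicted positive when its value >= cutoff.
    """
    best_cutoff, best_correct = None, -1
    for cutoff in sorted(set(values)):
        correct = sum((v >= cutoff) == y for v, y in zip(values, labels))
        if correct > best_correct:
            best_cutoff, best_correct = cutoff, correct
    return best_cutoff


# Hypothetical "lesion size" scores with labels (True = abnormal).
sizes = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
labels = [False, False, False, True, True, True]
clean_cutoff = learn_threshold(sizes, labels)

# Same scores, but two abnormal cases were wrongly recorded as normal.
noisy_labels = [False, False, False, False, False, True]
noisy_cutoff = learn_threshold(sizes, noisy_labels)
```

Trained on the clean labels, the cutoff lands at 6.0. Trained on the mislabeled data, it moves to 8.0, so the cases scored 6.0 and 7.0 would be missed.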

Short- vs Long-Term Impacts for Patients

Short-Term Benefits:

- Faster test results.

- Fewer missed diagnoses in radiology.

- Easier access to virtual care.

Long-Term Risks:

- Overdependence on black-box systems patients don’t understand.

- Widening health gaps if AI doesn’t serve diverse groups equally.

- Possible job displacement of healthcare workers.

Step-by-Step: How Patients Can Protect Themselves in the Age of AI

1. Ask Questions

- Was AI used in my diagnosis?

- How is my data protected?

2. Know Your Rights

- Under HIPAA, providers must give you a notice explaining how your health information may be used and shared, and you can ask for a record of certain disclosures.

- Some states (e.g., California) have stricter AI/data privacy laws.

3. Balance Convenience with Caution

- Telehealth AI tools are useful, but treat persistent or worsening symptoms as a red flag and see a clinician.

4. Support Transparency

- Patients can advocate for hospitals that disclose AI use and publish performance data.

5. Stay Human-Centered

- Use AI as a tool, not a replacement for doctor-patient relationships.

What Experts Say

- American Medical Association (AMA): Warns that “equity and transparency must guide AI adoption.”

- FDA (2024): Acknowledges gaps in regulation, pledges “continuous oversight” of adaptive AI tools.

- World Health Organization: Calls for “responsible AI” that prioritizes patient safety and fairness.

Expanded FAQs

- Will AI replace my doctor?

No. AI handles tasks like paperwork and scan analysis, but it cannot replace human empathy or judgment. Experts emphasize that AI should be a tool to assist doctors, not replace the patient-doctor relationship.

- Can AI detect cancer earlier than humans?

Yes. AI tools for analyzing X-rays and CT scans have outperformed radiologists in detecting breast cancer and lung nodules. These tools are most effective when helping doctors catch issues they might miss.

- Is my health data safe if my doctor uses AI?

Not always. AI systems require massive amounts of data, increasing the risk of privacy breaches and leaks. While laws like HIPAA exist, hacks can still expose millions of patient records.

- Can AI make mistakes in diagnosis?

Yes. AI is not perfect and has misdiagnosed serious conditions, such as mistaking appendicitis for acid reflux. There is also a risk that doctors may trust AI too much, even when it is wrong.

- Is medical AI biased against certain groups?

It can be. If algorithms are trained on biased data, they may misdiagnose minorities. For example, some models have underestimated kidney disease severity in Black patients, leading to delayed care.

- Will using AI lower my medical bills?

Not necessarily. While AI could save the healthcare industry billions, those savings may not reach patients. Some AI-driven diagnostics may cost less, but advanced therapies can remain expensive.

- What should I do if an AI chatbot diagnosis feels wrong?

Trust your body. If an AI tool suggests your symptoms are mild but you feel worse, do not rely on it. Always seek a human doctor for persistent or worsening symptoms.

Final Thoughts

AI is transforming American healthcare, offering unprecedented opportunities to catch disease earlier, streamline care, and personalize treatment. But the risks are real: bias, privacy threats, and a loss of human-centered care.

For patients in 2026, the wisest approach is balance — embrace AI’s promise, but demand transparency, equity, and safeguards. The future of healthcare should be tech-assisted, not tech-dominated.

Glossary

- AI (Artificial Intelligence): Computer systems mimicking human decision-making.

- ML (Machine Learning): Algorithms that learn from data patterns.

- NLP (Natural Language Processing): AI analyzing text, such as medical notes.

- HIPAA: US law protecting patient health information.

- Automation Bias: Overreliance on computer outputs, ignoring human judgment.